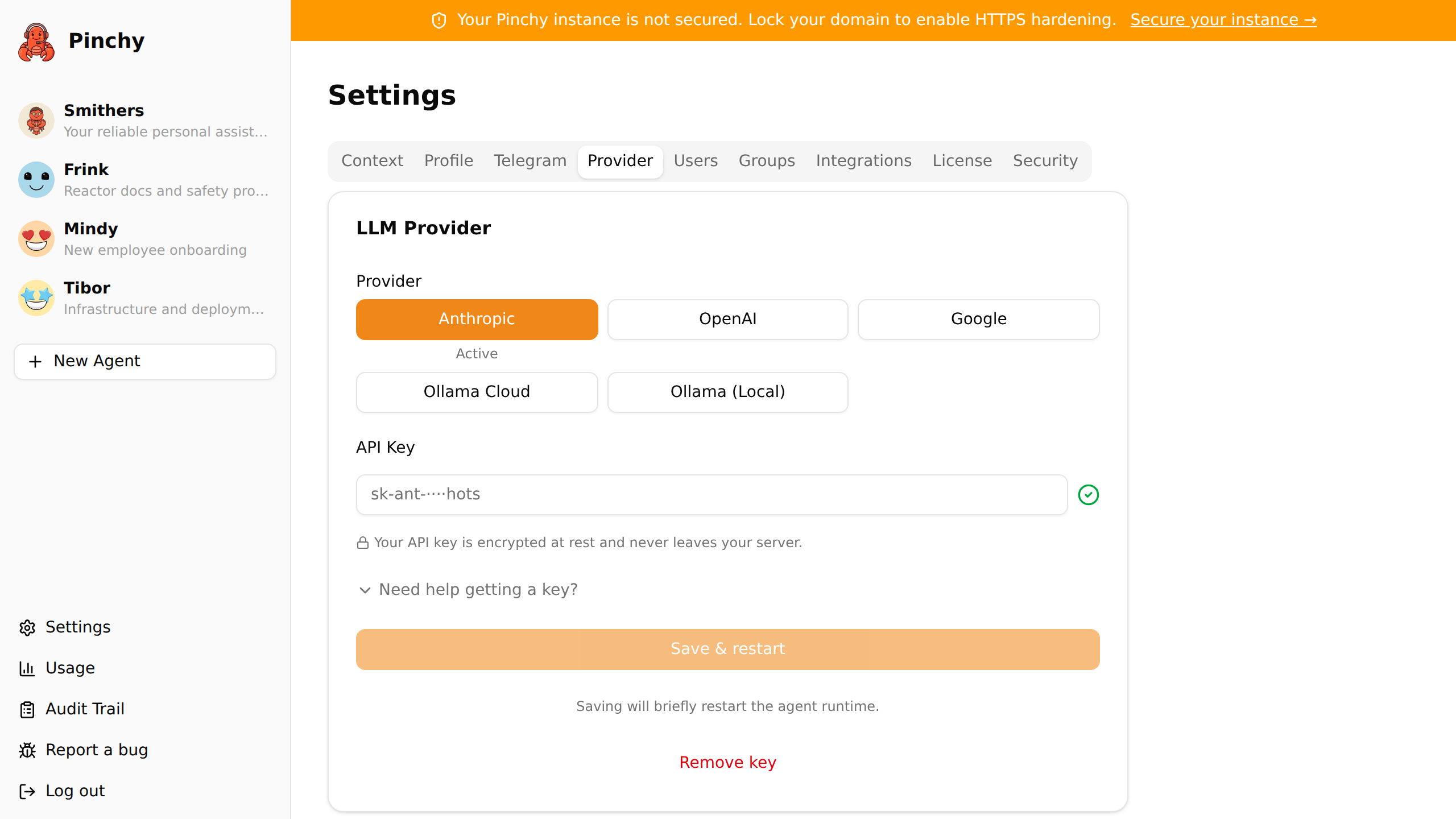

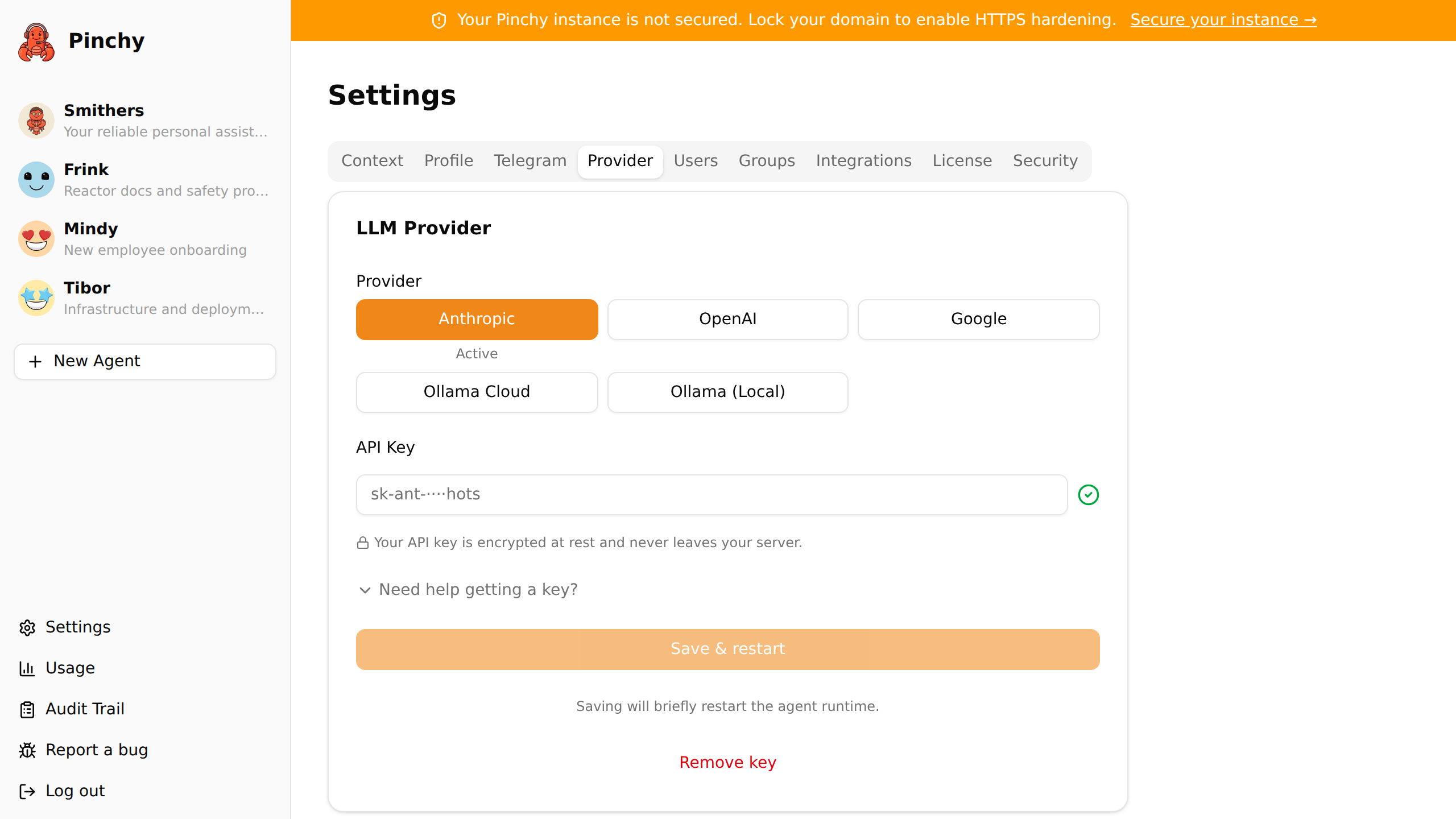

Integration

Run Pinchy agents against a local Ollama server for fully offline operation, or against Ollama Cloud for EU-friendly managed models. Same provider, different deployment, switched per agent.

Self-hosting used to mean giving up capability. Frontier models were closed, local models were rough, and the quality gap was real. That has changed. Modern open-weight models such as Qwen, Llama, and DeepSeek are good enough for a large share of enterprise work, especially the inbox-style work that Pinchy agents specialise in.

Pinchy supports Ollama as a first-class provider. You can wire up a local Ollama server and never talk to an external API again, or use Ollama Cloud for the convenience of managed hosting without leaving the Ollama ecosystem.

Two Deployments

Local Ollama

Install Ollama on a server you control: a workstation, a GPU box in your datacentre, or a dedicated VM. Pinchy connects to it via a base URL, and no calls leave your network. That makes it suitable for air-gapped and regulated environments.

Ollama Cloud

Managed hosting by Ollama, with the same API contract. It is an EU-friendly alternative to US frontier providers when you want managed convenience without the CLOUD Act overhead.
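To illustrate the "same API contract" point, here is a minimal Python sketch that builds a chat request for either deployment. It assumes Ollama's native `/api/chat` endpoint, the default local port 11434, and that Ollama Cloud is reached at `https://ollama.com` with an API key sent as a `Bearer` token; check the current Ollama documentation before relying on any of these. It does not show how Pinchy itself configures providers, and the model names are placeholders.

```python
import json
import urllib.request

# Default address of a self-hosted Ollama server (Ollama listens on port 11434 by default).
LOCAL_BASE_URL = "http://localhost:11434"
# Assumed Ollama Cloud address; verify against the current Ollama docs.
CLOUD_BASE_URL = "https://ollama.com"


def build_chat_request(base_url, model, prompt, api_key=None):
    """Build (but do not send) a non-streaming POST to Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }
    headers = {"Content-Type": "application/json"}
    if api_key is not None:
        # Only the managed deployment needs credentials; local Ollama has no auth by default.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send_chat(request, timeout=60):
    """Send a request built above and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["message"]["content"]


# The same function serves both deployments; only the base URL and credentials change.
local_req = build_chat_request(LOCAL_BASE_URL, "llama3.1", "Summarise this thread.")
cloud_req = build_chat_request(
    CLOUD_BASE_URL, "your-cloud-model", "Summarise this thread.", api_key="YOUR_KEY"
)
```

Calling `send_chat(local_req)` needs a running Ollama server with that model pulled. The request body is identical in both cases, so in this sketch moving an agent between deployments is a configuration change rather than a code change.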

Mix and match. Sensitive finance agent → local Ollama. Customer-facing draft agent → Claude. Internal helper → Ollama Cloud. The provider is a property of the agent, not the installation.
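One way to picture "the provider is a property of the agent" is a small data model in which each agent carries its own provider, plus a check that sensitive agents stay on-premises. This is a hypothetical Python sketch: the `Provider` and `Agent` types, the provider names, and the `provider_for` and `policy_violations` helpers are illustrative only and are not Pinchy's actual configuration schema.

```python
from dataclasses import dataclass


# Hypothetical types for illustration; not Pinchy's real configuration schema.
@dataclass(frozen=True)
class Provider:
    name: str
    base_url: str
    leaves_premises: bool  # True if prompts are sent to a third-party service


@dataclass(frozen=True)
class Agent:
    name: str
    provider: Provider  # the provider is set per agent, not per installation
    sensitive: bool = False


LOCAL_OLLAMA = Provider("ollama-local", "http://localhost:11434", leaves_premises=False)
OLLAMA_CLOUD = Provider("ollama-cloud", "https://ollama.com", leaves_premises=True)
CLAUDE = Provider("anthropic", "https://api.anthropic.com", leaves_premises=True)

# The mix-and-match example from the text.
AGENTS = [
    Agent("finance", LOCAL_OLLAMA, sensitive=True),
    Agent("customer-drafts", CLAUDE),
    Agent("internal-helper", OLLAMA_CLOUD),
]


def provider_for(agents, agent_name):
    """Look up which provider a given agent should call."""
    for agent in agents:
        if agent.name == agent_name:
            return agent.provider
    raise KeyError(agent_name)


def policy_violations(agents):
    """Return the names of sensitive agents whose provider sends data off-premises."""
    return [a.name for a in agents if a.sensitive and a.provider.leaves_premises]
```

With this setup, `policy_violations(AGENTS)` returns an empty list; reassigning the finance agent to `OLLAMA_CLOUD` would flag it.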

For EU companies navigating the US CLOUD Act and GDPR, Ollama, and especially local Ollama, is the cleanest story: no US-based cloud provider in the loop, no transatlantic data transfer, and no CLOUD Act exposure. The architecture is compliant by construction, not by data processing agreement (DPA).

Pair that with Pinchy's self-hosted deployment, audit trail, and scoped permissions, and you have a stack that can reasonably be deployed in banking, healthcare, legal, and government environments.
